Choosing between 2D and 3D vision systems for collaborative robots fundamentally depends on the complexity of the parts, their presentation, and the required precision. We integrate 2D vision when objects are flat, clearly separated, and their position and orientation are primarily determined by X and Y coordinates. This setup typically suits applications like quality inspection of labels or verifying component presence on a tray. For randomly presented or stacked items, or when depth perception is critical for grasping and collision avoidance, we specify 3D vision systems. These systems capture depth information, allowing the cobot to recognise objects in three dimensions (X, Y, Z) and calculate their orientation more accurately, which is essential for bin picking or precise assembly tasks.

Understanding Cobot Vision Systems - Key Factors

When we evaluate a vision system for a cobot application, we focus on three key factors that differentiate 2D and 3D capabilities: the dimensional data captured, cost, and integration complexity. The primary distinction lies in dimensional data: 2D vision captures a flat image, while 3D reconstructs a volumetric scene. This difference drives cost, integration complexity, and ultimately, the range of tasks a cobot can perform. For example, a simple pick-and-place from a conveyor where components are always fed in the same orientation usually only needs 2D vision. However, handling parts from a bin where items are jumbled requires the depth information only a 3D system can provide for successful grasping.

2D Vision Systems

Our experience shows 2D vision excels in structured environments. These systems use standard cameras to capture images, identifying objects based on contrast, colour, and geometric shapes in a two-dimensional plane. They are cost-effective, with typical price ranges between £5,000 and £10,000, not including integration. Setup is faster for straightforward applications, often involving a single overhead camera and basic lighting. We frequently deploy 2D vision for tasks such as quality control checks (e.g., verifying a cap is present), part inspection, or picking items from a fixed grid, where the object’s height is consistent or irrelevant. The main limitation is their inability to perceive depth, meaning they cannot differentiate between objects stacked on top of each other or correctly identify items presented at varying heights.
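To make the "X and Y only" point concrete, here is a minimal Python sketch of how a planar position and rotation can be recovered from a thresholded image using image moments. The function name `planar_pose` and the tiny hand-written mask are illustrative assumptions, not part of any particular camera vendor's software; a production system would work on real camera frames and convert pixels to millimetres through calibration.

```python
import math


def planar_pose(mask):
    """Return (cx, cy, angle_deg) of the foreground pixels in a binary mask.

    mask is a list of rows; any truthy value counts as part of the object.
    The angle is the major-axis direction in image coordinates (y points down).
    """
    pts = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not pts:
        raise ValueError("no foreground pixels found")
    n = len(pts)
    # Centroid: the X, Y position a 2D system would report.
    cx = sum(x for x, _ in pts) / n
    cy = sum(y for _, y in pts) / n
    # Second-order central moments describe how the shape is spread out.
    mu20 = sum((x - cx) ** 2 for x, _ in pts) / n
    mu02 = sum((y - cy) ** 2 for _, y in pts) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in pts) / n
    # Orientation of the major axis from the moments.
    angle = 0.5 * math.atan2(2 * mu11, mu20 - mu02)
    return cx, cy, math.degrees(angle)


# Hypothetical thresholded image of one rectangular part lying horizontally.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 0],
]
cx, cy, angle = planar_pose(mask)
print(cx, cy, angle)
```

Nothing in the result describes height, which is the limitation discussed above: the pose is complete only if every part sits on the same known plane.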

3D Vision Systems

For less structured scenarios, we specify 3D vision systems. These systems use technologies such as structured light, stereo vision, or time-of-flight to generate a point cloud or depth map, providing X, Y, and Z coordinates for each point in the scene. This enables them to perceive volume, orientation, and spatial relationships. While the acquisition cost is higher, ranging from £8,000 to £25,000 for the camera and software, the capability increase is significant. We specify 3D vision for complex tasks like bin picking, where parts are randomly oriented within a container, or for precise assembly operations that demand accurate part alignment in all three axes. A prime example is the Pickit 3D URCap, a system we frequently integrate, which can detect parts in under 1 second and is effective for bin picking up to 300 mm depth. Initial integration complexity for 3D systems is medium, requiring CAD model uploads or teach-by-showing methods, along with careful lighting optimisation.
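The step from a depth map to X, Y and Z coordinates can be sketched with the standard pinhole camera model. This is an illustration only: the function name `depth_to_points`, the intrinsic parameters `fx`, `fy`, `cx`, `cy`, and the 2x2 depth map are assumed values, and commercial 3D cameras typically perform this conversion internally.

```python
def depth_to_points(depth_mm, fx, fy, cx, cy):
    """Back-project a depth map into a list of (x, y, z) points in millimetres.

    depth_mm: rows of depth readings along the optical axis; 0 marks a
              missing reading in this sketch.
    fx, fy:   focal lengths in pixels (assumed calibration values).
    cx, cy:   principal point in pixels (assumed calibration values).
    """
    points = []
    for v, row in enumerate(depth_mm):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # skip pixels with no valid depth
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, float(z)))
    return points


# Hypothetical 2x2 depth map from a camera looking straight down into a bin.
depth = [
    [0, 500],
    [500, 400],
]
points = depth_to_points(depth, fx=500, fy=500, cx=0.5, cy=0.5)

# With a downward-facing camera, the smallest Z is the topmost surface,
# which is usually the safest candidate to pick first.
topmost = min(points, key=lambda p: p[2])
print(len(points), topmost)
```

A per-point Z value is what lets the picking logic rank candidates by height, something the 2D pipeline has no way to express.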

Vision System Comparison

We've compiled a brief comparison of the core attributes for both 2D and 3D vision systems to highlight their practical differences:

| Attribute | 2D Vision Systems | 3D Vision Systems | Why It Matters |
| --- | --- | --- | --- |
| Data Captured | X, Y coordinates, Colour, Intensity | X, Y, Z coordinates (depth), Volume, Orientation | Determines ability to perceive height and spatial relationships. |
| Typical Cost | £5,000 - £10,000 (system only) | £8,000 - £25,000 (system + software) | Budget implications; 3D costs more for increased capability. |
| Integration Complexity | Low to Medium | Medium to High | 3D often requires more sophisticated calibration and software configuration. |
| Ideal Scenarios | Pick from organised trays, quality inspection, pattern recognition, barcode reading | Bin picking, depalletising, precise assembly, random part acquisition, varying product heights | Matches the system to the structural complexity of your work environment. |
| Perception | Planar (flat) | Volumetric (depth and shape) | Crucial for handling jumbled parts or collision avoidance in tight spaces. |
| Typical Speed | Often faster for simple detection | Detection time under 1 second for many parts (e.g., Pickit) | Speed can vary significantly based on task complexity and processing power. |
| Lighting Needs | Often simpler, can be sensitive to shadows | Can be more tolerant to varying light, but specific lighting still critical for optimal performance | Affects environmental setup and robustness of detection. |

Source: Olympus Technologies project experience and manufacturer specifications.

Examples

We deploy both 2D and 3D vision depending on the specific manufacturing challenge. For instance, in a recent project involving quality inspection of blister packs in the pharmaceutical sector, we opted for a 2D vision system. The client needed to verify the presence of pills and correct packaging orientation. Since all items were presented on a flat conveyor, the X and Y coordinate data from a 2D camera was sufficient and cost-effective. The integration was straightforward, with the system quickly identifying anomalies with an accuracy rate exceeding 99.8%.

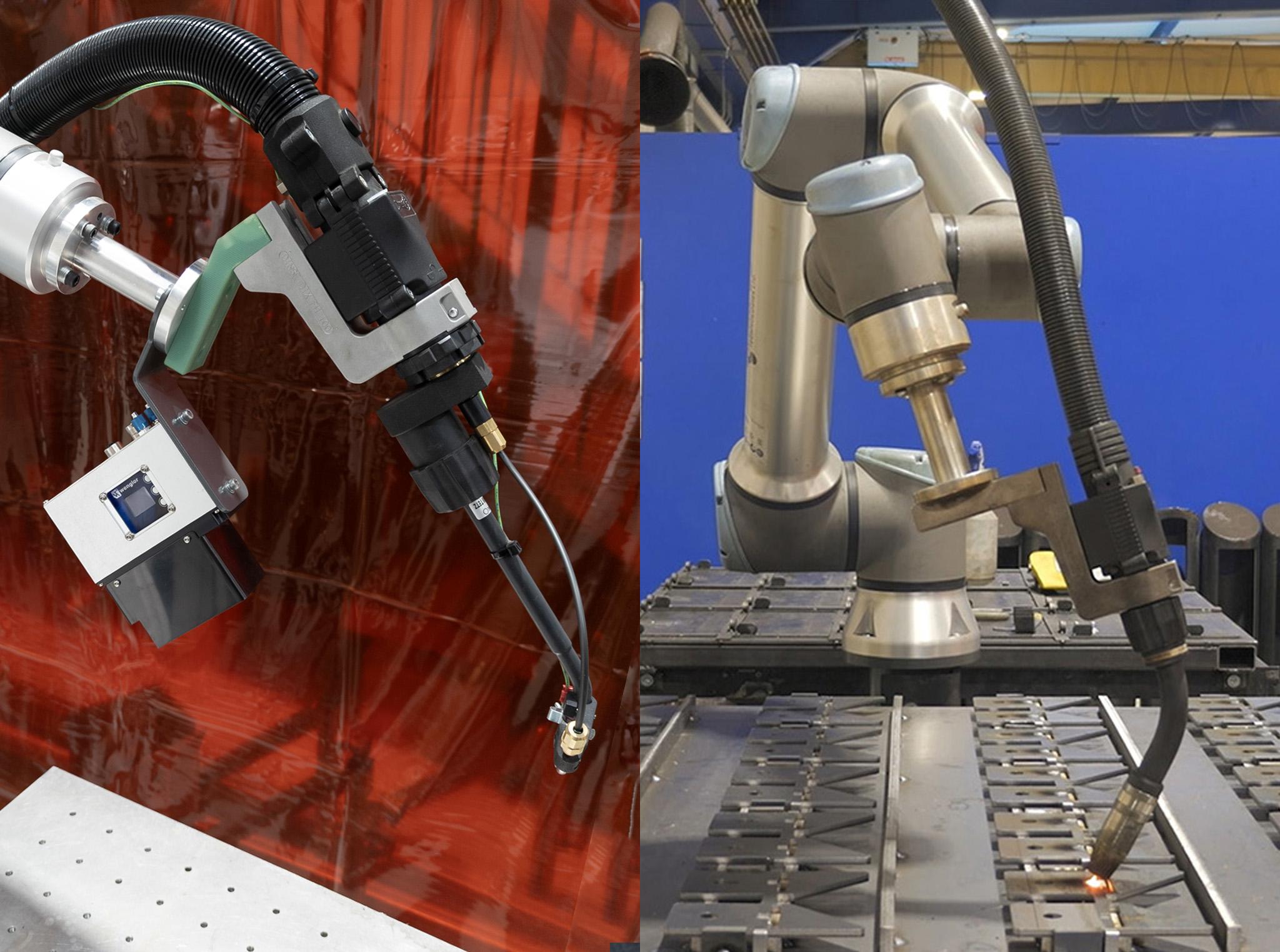

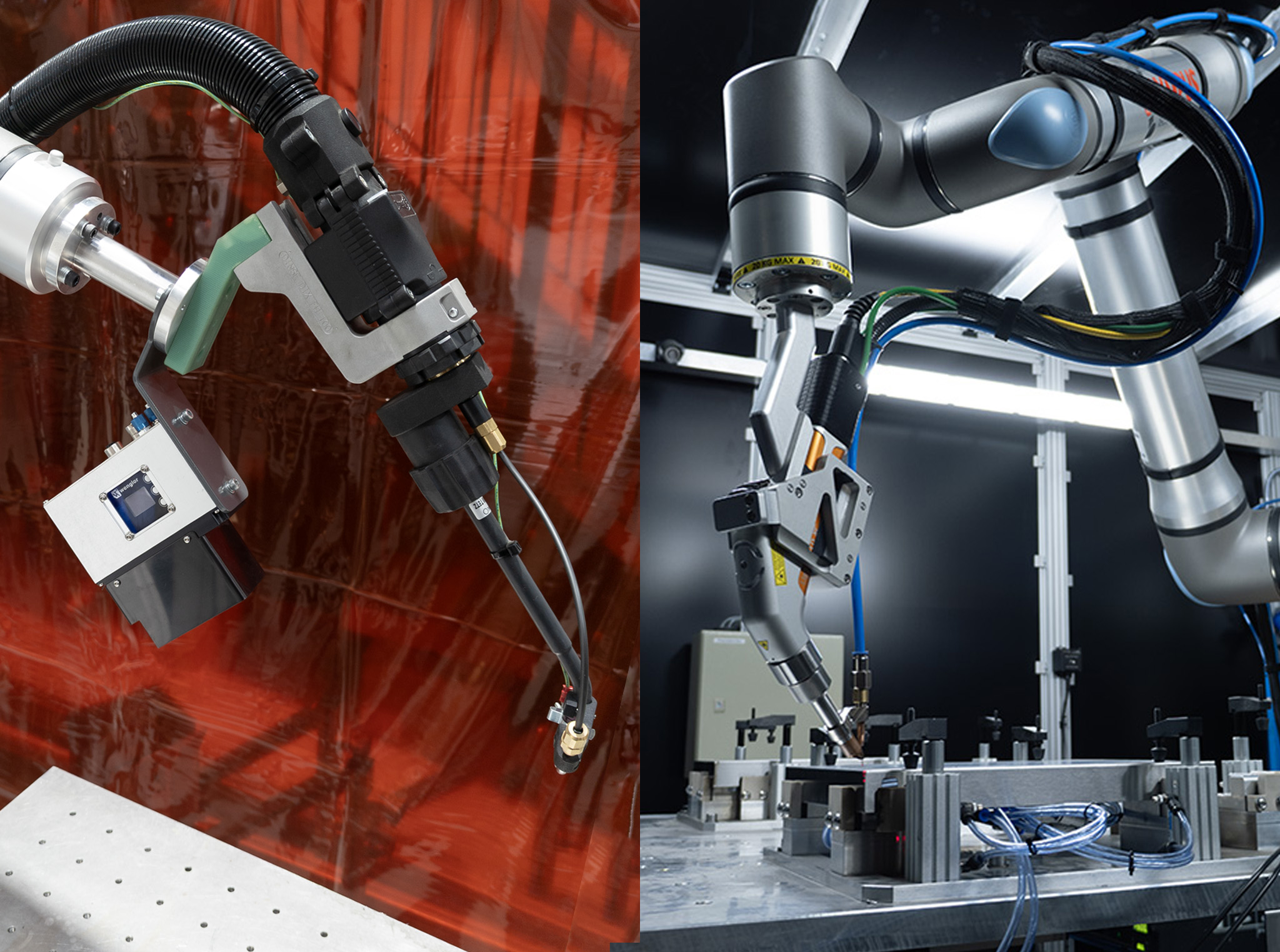

Conversely, for an automotive component manufacturer, we implemented a 3D vision system for bin picking. The task involved a UR10e cobot picking irregularly shaped metal castings from a large bin and placing them onto a CNC machine. The castings were randomly placed, creating a jumbled pile. Here, a 2D system would be ineffective: it lacks the depth perception needed to guide the gripper to the correct part without collisions and to determine the part's orientation accurately enough for a successful grip. The 3D vision system, integrated with a bespoke gripping solution, enabled the cobot to reliably pick parts from varying depths, significantly reducing the cycle time for machine tending compared to manual loading.

What Changes the Answer

The choice between 2D and 3D vision extends far beyond simply whether a part is flat or jumbled; it touches on project budget, required flexibility, and the long-term strategic goals for automation. While a 2D system offers a lower initial investment, typically £5,000-£10,000 for the camera, underestimating future expansion needs can lead to early obsolescence. We’ve seen projects where manufacturers initially chose 2D for a simple pick-and-place, only to realise six months later they needed to handle the same part from a new, less organised bin, necessitating a complete vision system overhaul. In our experience, that scenario adds 30-40% to the total project cost compared to specifying 3D upfront, so 3D is worth specifying from the start if there’s a clear path to randomised part presentation.
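As a worked illustration of that uplift, the short calculation below applies the 30-40% range to a project budget. The £60,000 figure is purely hypothetical and `retrofit_cost_range` is an illustrative name; substitute your own estimate for a 3D-first project.

```python
def retrofit_cost_range(upfront_3d_project_cost):
    """Return (low, high) total cost in whole pounds for the 2D-then-3D route.

    Applies the 30-40% uplift quoted in the text to the cost of a project
    that specifies 3D vision from the start. Integer arithmetic keeps the
    result in whole pounds.
    """
    low = upfront_3d_project_cost * 130 // 100
    high = upfront_3d_project_cost * 140 // 100
    return low, high


# Hypothetical total project cost when 3D is specified upfront.
low, high = retrofit_cost_range(60_000)
print(f"Retrofit route: £{low:,} to £{high:,}")
```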

Considering the application's rigidity is paramount. If part presentation is guaranteed to remain consistent – always in the same place, facing the same direction, on a flat surface – 2D vision remains a reliable and cost-efficient choice. However, even minor deviations, such as parts occasionally overlapping or being slightly tilted, introduce significant error rates or require extensive human intervention with 2D setups. The added tolerance of 3D vision means fewer production stops for human correction.

We also find that the physical environment itself influences the decision. Simple lighting conditions make 2D vision viable, but fluctuating natural light, reflections from shiny surfaces, or ambient factory dust and grime degrade 2D performance much faster than they degrade 3D performance. 3D vision, particularly structured light systems, often copes better with challenging lighting, glare, or even dusty environments.
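The considerations in this section can be condensed into a rough screening checklist. The sketch below is one possible encoding, not a formal selection procedure; `recommend_vision` and its parameter names are hypothetical, chosen for illustration, and budget and integration effort still need to be weighed separately.

```python
def recommend_vision(*, parts_overlap=False, height_varies=False,
                     random_orientation=False, needs_z_clearance=False,
                     randomisation_planned=False, harsh_lighting=False):
    """Return ("2D" or "3D", reasons) from a few yes/no application facts.

    Any single factor that depends on depth, or on tolerance to a poorly
    controlled environment, tips the recommendation towards 3D.
    """
    checks = [
        (parts_overlap, "parts overlap or are stacked"),
        (height_varies, "part height varies"),
        (random_orientation, "parts arrive in random orientations"),
        (needs_z_clearance, "Z data needed for collision avoidance"),
        (randomisation_planned, "less organised presentation is planned"),
        (harsh_lighting, "lighting, glare or dust is hard to control"),
    ]
    reasons = [text for flag, text in checks if flag]
    if reasons:
        return "3D", reasons
    return "2D", ["presentation is flat and consistent"]


# Example: a tray-picking cell today, with bin picking planned later.
choice, reasons = recommend_vision(randomisation_planned=True)
print(choice, reasons)
```

Returning the reasons alongside the answer keeps the recommendation auditable, which is useful when the result is borderline and the extra cost of 3D has to be justified.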

When Payload Ratings Don't Tell the Full Story

The decision also impacts the cobot's payload and reach requirements, particularly for bin picking applications. A 3D vision system that precisely locates a part allows for a lighter, more agile gripper, as it does not need to compensate for large positional uncertainties. This frees up payload capacity for a heavier part or allows for a smaller, less expensive cobot model like a UR10e rather than a UR20. Understanding these downstream impacts during the initial assessment is how Olympus Technologies ensures a future-proof solution.

The Certification Gap in Collaborative Welding

Although vision systems are primarily used for material handling and inspection, their role in welding applications is growing, especially in real-time seam tracking. Here, the distinction between 2D and 3D becomes critical for quality and safety. While 2D systems can track a weld seam along a flat plane, 3D vision is necessary for tracking variable gap sizes or inconsistent joint preparations, which are common in real-world fabrication. This affects not only weld quality but also how we conduct risk assessments and ensure compliance with safety standards such as ISO 10218-1/2, especially when dealing with arc flash zones or laser welding.

Related Pages

We've detailed how vision systems integrate into various facets of automation. Explore these related guides and services:

- What are Vision Systems for Cobots?: A foundational guide to the role of machine vision in collaborative automation.

- Cobot Palletising: Discover how vision systems enhance efficiency and accuracy in automated palletising operations, improving throughput from 8 to 15 cycles per minute.

- Cobot Welding: Learn about vision-guided arc and laser welding, where precise part location is crucial for achieving high-quality welds at speeds up to 800 mm/min.

- Machine Tending: See how vision provides guidance for loading and unloading parts into CNC machines, with typical cycle times of 15-45 seconds.

- Bespoke Gripping Solutions: Vision systems often inform the design of custom end-of-arm tooling, ensuring the gripper matches the part's presentation.

FAQs

What is the typical pricing for vision systems for cobots?

Vision systems range from around £5,000 for basic 2D setups to £25,000 for advanced 3D systems, including camera hardware and software licences. This does not include integration costs specific to your application's complexity.

Can 2D vision be upgraded to 3D later?

While some components are reusable, upgrading from a pure 2D system to a 3D system typically involves acquiring new camera hardware, new software, and a significant amount of re-integration and testing. For instance, a Pickit 3D URCap system, which we often integrate, requires a new investment in both the specific camera and the software licence. We always recommend considering future flexibility from the outset to avoid costly overhauls.

What are the main limitations of 2D vision in cobot applications?

The core limitation of 2D vision is its inability to perceive depth. This means it cannot handle randomly piled parts (bin picking), objects that vary in height, or scenarios where precise Z-axis information is needed for collision avoidance or intricate assembly. It also struggles with overlapping parts or variable lighting conditions that create shadows.

When is 3D vision overkill?

3D vision can be overkill for applications where parts are consistently presented in a known, flat orientation, such as picking items from a pre-loaded jig, quality inspection of flat components, or reading barcodes on boxes moving on a conveyor. In these cases, 2D vision provides all the necessary information at a lower cost and with simpler integration.

Next Steps

Deciding on the right vision system for your cobot doesn't have to be complex. At Olympus Technologies, we specialise in understanding your specific manufacturing challenges and recommending the most effective, reliable, and future-proof solutions.